We’re turning two successful AI engineering workshops into a scalable, productized coaching service. This is the story of how we’re validating every step: pretotyping methodology, real experiment data, and the kind of failures most companies don’t talk about.

Most guides on productizing a service start with pricing tiers and delivery frameworks. This one starts with a confession: we cold-messaged 96 CTOs and got zero replies. If you’re in the messy early stage of turning expertise into a repeatable product, still figuring out if anyone will pay, this is for you.

The problem nobody’s naming

AI coding tools have crossed the mainstream threshold. 72% of developers who try them use them daily. Teams are shipping faster than ever.

But here’s what the vendor pitch decks don’t show: the bottleneck didn’t disappear. It moved.

Developers using AI complete 21% more tasks and merge 98% more pull requests. Great. Until you see the other side: PR review time increases by 91%. LinearB’s 2026 analysis of 8.1 million pull requests across 4,800 engineering teams found that developers feel 20% faster but are actually 19% slower. Jeez, let me write this again.

You can feel faster, but actually you’re slower.

The work shifted from writing code to verifying it.

We heard this firsthand. In a structured interview with a team lead at a Bulgarian software company, the severity was striking.

His team had 13 tasks waiting for review in a single week. End-to-end and frontend tests couldn’t keep up with the pace of AI-generated code. I’m not talking about the coverage-padded tests but about actual business logic tests.

When we asked about TDD, his honest answer was: “I’m just not sure if we do or do not do TDD”.

This isn’t a skill issue. It’s a structural one. AI output velocity has outpaced the team’s ability to review and test it.

96% of developers don’t fully trust AI-generated code accuracy.

Yet 59% rate the effort to review it as “moderate” or “substantial”.

There are 1.7x more issues in AI-coauthored PRs versus human-written ones (CodeRabbit’s analysis of 470 real-world pull requests).

There is no “AI SDLC.” Most teams are winging it.

Why a cooperative is productizing this (and why we didn’t build it yet)

I should explain how we work, because it shapes everything about this story.

Camplight is a tech cooperative. 20+ co-owners, no investors, no boss. We follow the unFIX model: I’m part of the “Partner Success” team that does enablement for value-aligned solution teams. But the relevant part for this story is our Product Forum.

Every month, the Product Forum, a cross-team initiative, chooses one internal venture to invest in. We put in 10-30% equity worth of our time and help with validation: go-to-market, growth, research, strategy. The key word is validation. We don’t build products on gut feeling. We pretotype them.

Pretotyping, from Alberto Savoia’s framework, means testing whether anyone wants the thing before you build the thing. You define an XYZ hypothesis (“at least X% of Y will Z”), set a minimum success threshold, run the cheapest possible experiment, and let the data decide.

This is the story of how we’re productizing Cognitive Rebase. We’re turning two successful AI engineering workshops into a scalable coaching service. The workshops were created by two of our co-owners, Tsvetan Tsvetanov and Martin Martinov.

Tsvetan and Martin built two workshops for DEV.BG Grow: “Beyond Vibe Coding” and “Legacy Code with AI.” They sold tickets, ran courses, got real attendees. Sopra Steria Bulgaria’s CTO bought 20 tickets for his team. E-Comprocessing’s CTO came back for both courses. Engineers from Commerzbank, HedgeServ, Accedia, and OfficeRnD signed up individually.

Six companies. Real money. Real signal.

The Product Forum picked Cognitive Rebase as our venture to validate. My job: figure out if this demand is scalable, or if those 6 companies were a lucky cluster.

The future of learning is personalization

A few weeks ago I listened to Joe Liemandt on The Knowledge Project podcast. Liemandt is the principal of Alpha School. It’s a school where students spend two hours a day on AI-personalized instruction and score in the top 1% on standardized tests.

Two hours. Top 1%.

The math is staggering: a full grade level of material takes about 20 hours to master. A kid who’s three years behind can catch up in 60 hours. Not because the kids are smarter, but because the AI tutor adapts to each student. It finds their gaps, adjusts difficulty, and moves on only when mastery is proven. No sitting through lessons they already know. No falling behind because the class moved on.

The insight that stuck with me: AI isn’t replacing the teacher. It’s personalizing the path. The teacher is still there for mentoring, life skills, the human stuff. But the knowledge transfer? That’s 10x more efficient when it’s personalized.

Now apply that to engineering teams.

Today, most AI training for developers is a workshop. Everyone sits in the same room, hears the same material, does the same exercises. The engineer who’s great at prompting but terrible at testing AI output gets the same session as the one who writes good tests but doesn’t know how to structure context for the model, and you have no way to tell which is which without asking. Two days later, retention drops. A month later, most have reverted to old habits.

What if, instead, each engineer got a personalized AI capability assessment: their specific gaps identified, a coaching plan generated for them, and weekly reports tracking whether they’re actually improving?

That’s the shift: from time-based (everyone sits for 8 hours) to mastery-based (you’re done when you’ve learned it). From content delivery to continuous coaching. Workshops don’t scale. Personalized AI coaching does, because AI makes the personalization layer economically viable for the first time.

We’ve already started building this. We took Tsvetan Tsvetanov, one of our trainers who built both workshops, and cloned his teaching methodology into AI-powered personalized learning paths. Each path adapts to the engineer’s current skill level and focuses on their specific gaps. Instead of a generic 8-hour session, the AI version of Tsvetan meets each person where they are.

Here’s an example. This is a learning path on context bankruptcy, one of the core concepts from the workshop, delivered as a personalized micro-lesson:

We actually cloned Tsvetan so we can do all kinds of crazy stuff, like this trailer 😀 Is cloning the future of teaching and coaching? Can we scale people’s “avatars” to 500-1000 1:1 trainings?

That’s what Cognitive Rebase is building toward. But we’re not there yet. First, we need to know if engineering leaders will pay for it.

Experiment 1: Cold outreach to 96 Bulgarian CTOs

Our first experiment was straightforward: find CTOs at signal-active Bulgarian tech companies and pitch them directly via LinkedIn.

The setup:

- 193 contacts from Apollo (CTOs, VPs Eng, Heads of Engineering in Bulgaria)

- Filtered by growth signals: rapid growth, recent funding, new products, new partnerships

- Cleaned to 96 after removing non-tech and enterprise contacts

- 3-step LinkedIn sequence via We-Connect (in Bulgarian)

- XYZ hypothesis: “At least 5% will reply within 14 days”

The result: zero replies. Not one.
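To make the pass/fail rule explicit, here is a minimal sketch of how an XYZ hypothesis like this one could be scored. The `XYZHypothesis` class and its field names are hypothetical and not part of any pretotyping tool; only the numbers (96 contacts, a 5% threshold, 0 replies) come from the experiment.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class XYZHypothesis:
    """Hypothetical container for 'at least X% of Y will Z'."""
    min_rate: float      # X, as a fraction (0.05 means 5%)
    audience_size: int   # size of Y
    action: str          # Z, kept only as a label

    def required_count(self) -> int:
        # Smallest whole number of people that reaches X% of Y.
        return math.ceil(self.min_rate * self.audience_size)

    def passed(self, observed: int) -> bool:
        return observed >= self.required_count()


outreach = XYZHypothesis(min_rate=0.05, audience_size=96,
                         action="reply within 14 days")
replies = 0  # observed result of the LinkedIn sequence

print(outreach.required_count())  # 5 replies needed to pass
print(outreach.passed(replies))   # False
```

Writing the threshold down as a count (5 replies) before the experiment runs is what keeps the result from being reinterpreted afterwards.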

I was genuinely surprised. The targeting was signal-based. These weren’t random CTOs. They were at companies actively growing, hiring engineers, launching new products. The exact profile where AI code quality should matter most.

Looking back, the message was too generic. We said “AI training” but didn’t connect it to anything specific about their company or their pain. It was indistinguishable from the 50 other LinkedIn messages a CTO gets every week. We had real social proof, a CTO who bought 20 tickets, and we didn’t use it. We led with a category (“AI engineering training”) instead of a result (“Sopra Steria’s CTO trained his entire team through us”).

Lesson: when you’re productizing a service, your credibility IS the product. A cold message from someone the CTO has never heard of is just noise. No matter how good your targeting is.

Experiment 2: Reddit problem validation

After the CTO outreach failed, we shifted from pushing a solution to validating the problem.

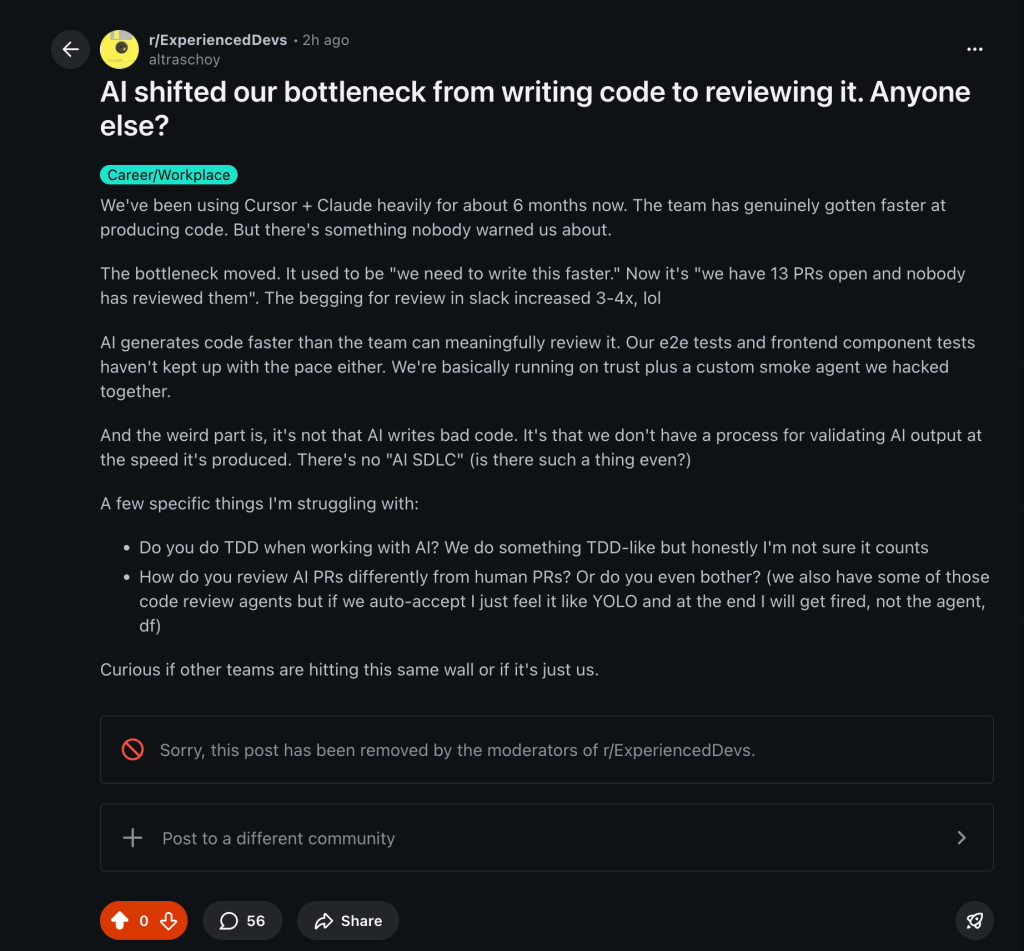

We designed 10 Reddit posts for engineering subreddits: r/ExperiencedDevs, r/programming, r/webdev, r/CTO, r/agile, r/devops. Each framed a real pain point from our customer interview:

- “AI shifted our bottleneck from writing code to reviewing it. Anyone else?”

- “The real cost of AI coding tools isn’t hallucinations, it’s the review backlog”

- “TDD in an AI-first workflow: does anyone actually do this, or are we all winging it?”

Zero links to Cognitive Rebase. Zero selling. Posted from a personal dev account. The replies are the signal.

XYZ hypothesis: at least 3 out of 10 posts generate 20+ substantive comments confirming the problem.

The results: inconclusive, leaning validate

We published 5 of the 10 planned posts. The other 5 never went up. Here’s what happened with the ones that did.

The r/ExperiencedDevs post (“AI shifted our bottleneck from writing code to reviewing it”) got 55 comments before moderators removed it. Twice. That’s our strongest signal. The “review bottleneck” framing triggered real discussion from experienced engineers. One commenter described setting up mandatory code review templates specifically for AI-assisted work. Another said this pattern shows up on every team once they adopt AI tools.

r/webdev was hostile. 25% upvote ratio, 26 comments, roughly 60% pushback. The dominant response was “just use AI to write tests too.” The testing gap framing got dismissed as a skill issue, not a structural problem. One commenter with 27 upvotes did validate the core concern: code generated in hours with no test coverage is a recipe for disaster. But the audience overall wasn’t interested in process discussions.

r/ycombinator (an unplanned addition, replacing r/CTO) was small but receptive. 4 upvotes, 4 comments, 100% upvote ratio, zero hostility. The startup and founder audience engaged constructively with the “no AI methodology” framing.

r/agile was dead. 2 upvotes, 3 comments, dismissive tone. Not the right audience for this.

What the Reddit data says after a combined 20k views

The MEH (Market Engagement Hypothesis) scorecard came back mixed. Two posts hit 20+ comments (r/ExperiencedDevs at 55, r/webdev at 26), but one was removed and the other was mostly hostile. Zero DMs received. “Me too” replies came in below threshold at 3-4 per qualifying post instead of the 5+ we needed.
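A rough sketch of the comment-count part of that scorecard, to show why the result is neither a pass nor a fail yet. It uses only the four comment counts reported in this write-up and treats raw comment count as a stand-in for "substantive" comments, which is a simplifying assumption; the variable names are illustrative.

```python
# Comment counts as reported in this write-up. Raw counts are used as a
# proxy for "substantive" comments, which overstates the hostile threads.
posts = {
    "r/ExperiencedDevs": 55,
    "r/webdev": 26,
    "r/ycombinator": 4,
    "r/agile": 3,
}

COMMENT_THRESHOLD = 20  # a post qualifies at 20+ comments
POSTS_NEEDED = 3        # hypothesis: at least 3 of 10 posts qualify
POSTS_PLANNED = 10
POSTS_PUBLISHED = 5     # half of the planned posts went up

qualifying = [sub for sub, n in posts.items() if n >= COMMENT_THRESHOLD]
shortfall = POSTS_NEEDED - len(qualifying)
still_unposted = POSTS_PLANNED - POSTS_PUBLISHED

# The hypothesis is still reachable if the unposted half can cover the gap.
can_still_pass = shortfall <= still_unposted

print(qualifying)      # ['r/ExperiencedDevs', 'r/webdev']
print(shortfall)       # 1
print(can_still_pass)  # True
```

On comment count alone, one more qualifying post among the remaining five would meet the threshold, which is why stopping here would be premature.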

But the signal underneath the noise is clear. “Review bottleneck” resonates. The framing that AI output velocity has outpaced review capacity gets engineers talking. When we led with the testing angle instead, it got dismissed.

The audience matters more than the framing. Founders and CTOs engaged constructively (r/ycombinator). Individual contributors on craft-focused subs (r/webdev) got defensive. This maps directly to our buyer personas: Persona A (Scaling CTO) is more receptive on Reddit than Persona C (Self-Investing Dev).

We can’t call this validated or killed with only 50% of the experiment executed. But the direction is clear: lead with the review bottleneck, target founder and leadership subs, and finish the remaining posts.

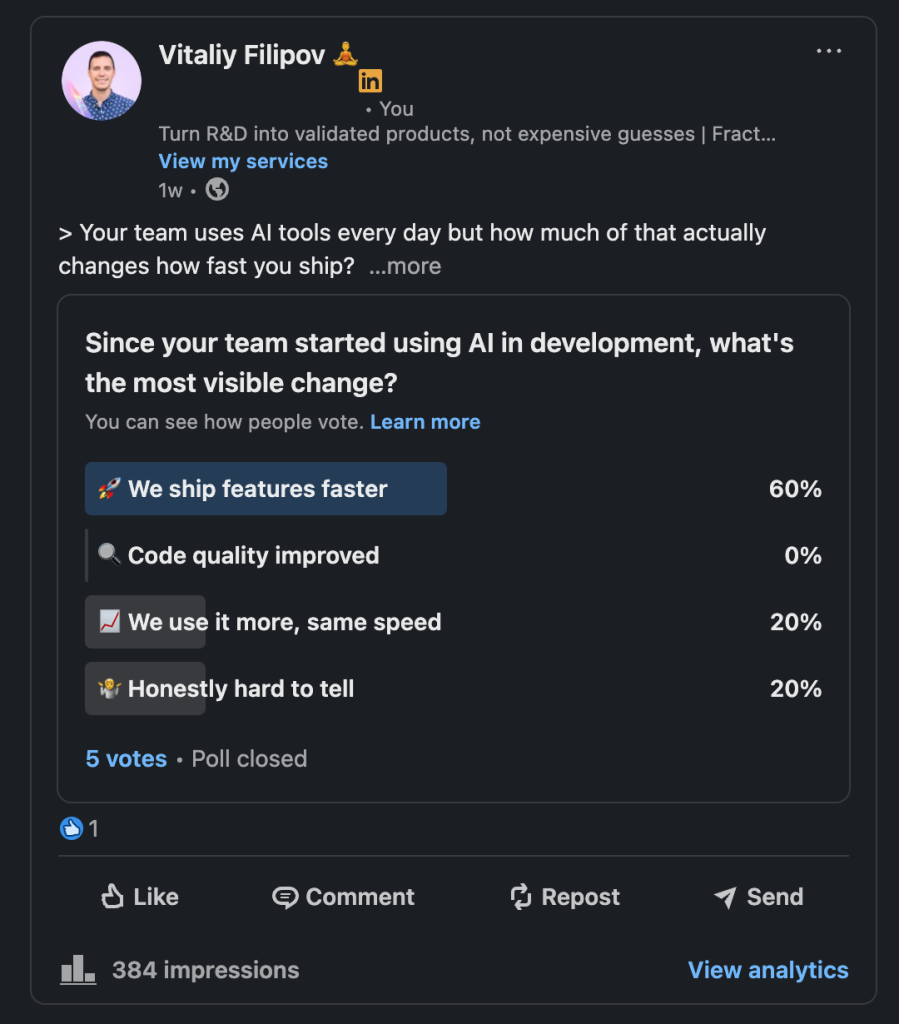

Experiment 3: LinkedIn polls

We also designed a 13-poll LinkedIn campaign. Each poll was adapted from a customer interview question, progressing through a funnel:

- Posts 1-4 (Problem recognition): “Since your team started using AI, what’s the most visible change?”

- Posts 5-8 (Solution awareness): “Per-engineer AI coaching insights; useful or creepy?”

- Posts 9-13 (Buying signal): “What would make AI training go from ‘we should’ to ‘we need this now’?”

XYZ hypothesis: at least 3% of engineering leaders who see any poll will cast a vote.

Experiment 4: Personalized ABM pages

Our current experiment takes the opposite approach from the CTO mass outreach: high effort, low volume.

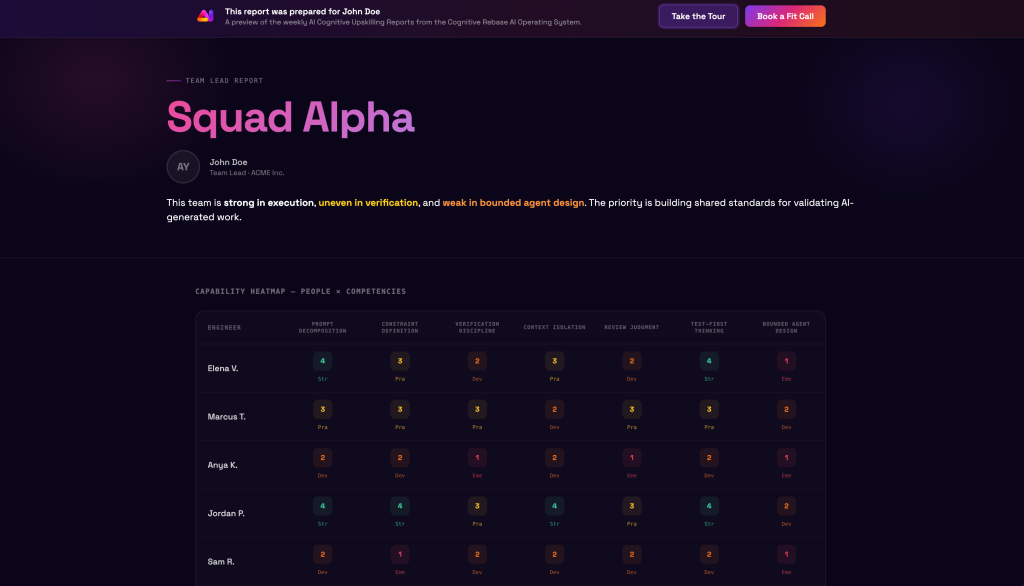

Instead of messaging 96 people with a generic pitch, we pick one company, deep-research their team, and build a personalized AI Capability Report page for each engineer. Each page includes their name, photo, title, and a static example of what weekly coaching reports would look like.

Each person gets a unique URL. The page includes a guided tour explaining each section and a “Book a Fit Call” CTA. We enriched contacts via Apollo’s API, downloaded LinkedIn photos, and automated the entire pipeline. One CLI command does everything from search to deploy.

Our honest probability estimate: 5-10% chance of getting 1 fit call from ~20 contacts. The funnel math is brutal: 20 → ~5 accept connection → ~2 click → maybe 1 acts.
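The funnel above can be sketched as stage-to-stage conversion rates. The stage counts are the rough estimates from the text, not measured data, and the stage labels and the `step_rates` helper are illustrative names.

```python
# Estimated funnel from the text: 20 contacts, ~5 accept, ~2 click, ~1 acts.
funnel = [
    ("contacted", 20),
    ("accepted connection", 5),
    ("clicked page", 2),
    ("acted", 1),
]


def step_rates(stages):
    """Return (from_stage, to_stage, conversion) for each adjacent pair."""
    return [
        (prev_name, name, count / prev_count)
        for (prev_name, prev_count), (name, count) in zip(stages, stages[1:])
    ]


for src, dst, rate in step_rates(funnel):
    print(f"{src} -> {dst}: {rate:.0%}")

end_to_end = funnel[-1][1] / funnel[0][1]
print(f"end to end: {end_to_end:.0%}")
```

With these estimates the steps convert at 25%, 40%, and 50%, for 5% end to end. At a sample of about 20 contacts, a single person more or fewer at any stage moves the result a lot, so the measured rates per stage matter more than the final count.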

So why run it? Because the CTO experiment proved that low-effort, high-volume doesn’t work for us. If ABM works even once, the playbook is repeatable: research, enrich, personalize, deploy, outreach. And unlike the generic campaign, each touchpoint demonstrates the product’s core value: personalization.

What we’ve learned so far

1. The problem is real, and Reddit points the same way. Every engineer we talk to recognizes it. AI output is outpacing review and testing capacity. The data confirms it at scale: 1.7x more issues in AI PRs, 91% longer reviews, 96% don’t trust AI code. When we posted “AI shifted our bottleneck from writing code to reviewing it” on r/ExperiencedDevs, the thread drew 55 comments before moderators shut it down.

2. Cold outreach doesn’t work without proof. 0 from 96. The message was generic, had no social proof, and sounded like every other LinkedIn pitch. The validated buyers we DO have, six companies who paid real money, all came through a warm channel (DEV.BG).

3. The buyer exists, but they find you. You don’t find them (yet). Our paying customers are 100-500 employee Bulgarian companies, split between IT services/nearshore and fintech/banking. CTOs buy in bulk, senior devs self-invest. The pattern is clear. The distribution channel isn’t.

4. Bottom-up beats top-down, but audience selection matters. We’re pivoting from targeting CTOs to targeting team leads and senior ICs, the people who feel the review bottleneck daily. Reddit taught us something more specific: founder and CTO communities (r/ycombinator) engage constructively with process problems, while craft-focused developer communities (r/webdev) dismiss them as skill issues. Same problem, same framing, completely different reception.

5. Personalization is the differentiator, for the product AND the go-to-market. Just like Alpha School’s AI tutor generates a personalized learning path per student, Cognitive Rebase’s value is personalized capability coaching per engineer. The marketing should mirror the product: a personalized report page is more compelling than a generic pitch email.

6. Lead with “review bottleneck,” not “testing gap.” The Reddit experiment made this hierarchy clear. When we frame the problem as “AI output velocity has outpaced review capacity,” people share their own stories. When we frame it as “tests can’t keep up,” people say “just use AI for tests too” and move on. The testing gap is real, but it lands better as a consequence of the review bottleneck, not as the headline.

What’s next

We’re not done experimenting. The pretotyping mindset says: don’t fall in love with your solution, fall in love with the problem. Let the data decide.

Here’s what’s ahead:

- Finish the Reddit experiment: 5 of 10 posts are still unposted. We’re shifting them to friendlier subs (r/ycombinator, r/startups, r/softwaredevelopment, r/CodingWithAI) and leading every post with the review bottleneck framing

- Deploy the personalized ABM pages: test high-effort, low-volume against the 0-from-96 baseline

- Run the LinkedIn poll campaign: validate the problem publicly, build audience

- Pivot to bottom-up: target team leads and senior engineers who feel the pain, not CTOs who see it as noise

- Measure everything: connection accept rates, page visits, tour completions, replies, fit calls booked

If it works: scale the playbook. If it doesn’t: reframe and try again. That’s how a cooperative validates ventures. With discipline, not delusion.

We’re looking for 3 beta companies

If you’re an engineering leader at a company with 50-500 engineers and your team is using AI tools without a structured methodology, we want to talk to you.

Our first three pilot companies get the full Cognitive Rebase program at a significant discount in exchange for honest feedback and a case study. That means workshops led by engineers who’ve taught this at scale, plus personalized capability reports for your team.

The deal: you get structured AI engineering training at a fraction of the price. We get the data to build a better product.

Sources

- Sonar State of Code Developer Survey 2026: 96% don’t trust AI code, 59% rate review effort as moderate/substantial

- Stack Overflow Developer Survey 2025: 72% daily AI tool usage among adopters

- LinearB 2026 analysis: 8.1M pull requests, 39-point perception gap

- The Knowledge Project #228: Joe Liemandt on Alpha School