Three lead magnets, three customer interviews, and a WhatsApp number instead of a dashboard.

Most new product builds follow a familiar pattern: pick a stack, scope an MVP, build for three months, launch, and pray. Three months later, give up.

We’ve shipped enough of those at Camplight to know the failure mode. Three months in, the demo is polished and the architecture diagram is beautiful, but the actual customers you intended to serve haven’t told you whether they want it.

By the time the first user touches the thing, the team has invested too much to admit the wedge was wrong. So you ship a product nobody asked for into a market that’s already too crowded, and you tell yourselves the next iteration will fix it.

We don’t do that anymore.

Internally, we build a new product almost every month. We keep a roadmap of a dozen ideas, score them, and pick one to focus on. We do this for ourselves and as a service for established businesses. The loop, shown below, runs in one-month sprints with hundreds of small experiments aimed at (in)validating a product hypothesis.
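To make the "score them, and pick one" step concrete, here is a minimal sketch in Python. The criteria (pain, reach, effort), the 1-5 scale, the formula, and the idea names are all assumptions for illustration; they are not the scoring model we actually use.

```python
from dataclasses import dataclass


@dataclass
class Idea:
    name: str
    pain: int    # assumed criterion: how painful the problem is, 1-5
    reach: int   # assumed criterion: how cheaply we can reach customers, 1-5
    effort: int  # assumed criterion: cost of the cheapest experiment, 1-5


def score(idea: Idea) -> float:
    # Higher pain and reach raise the score; higher effort lowers it.
    return idea.pain * idea.reach / idea.effort


def pick_next(ideas: list[Idea]) -> Idea:
    # The idea with the highest score becomes the focus of the next sprint.
    return max(ideas, key=score)


# Placeholder roadmap entries, not real ideas from our backlog.
roadmap = [
    Idea("idea-a", pain=4, reach=3, effort=2),
    Idea("idea-b", pain=5, reach=2, effort=4),
    Idea("idea-c", pain=3, reach=5, effort=3),
]
chosen = pick_next(roadmap)
```

Dividing by effort is a deliberate choice in this sketch: it favours ideas that can be tested cheaply, which matches the spirit of one-month sprints.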

Chiefbase is the latest worked example of our validation-first methodology: we validate by pretotyping, ship the cheapest possible surfaces we can put in customer hands, and only build the next thing when the conversations demand it.

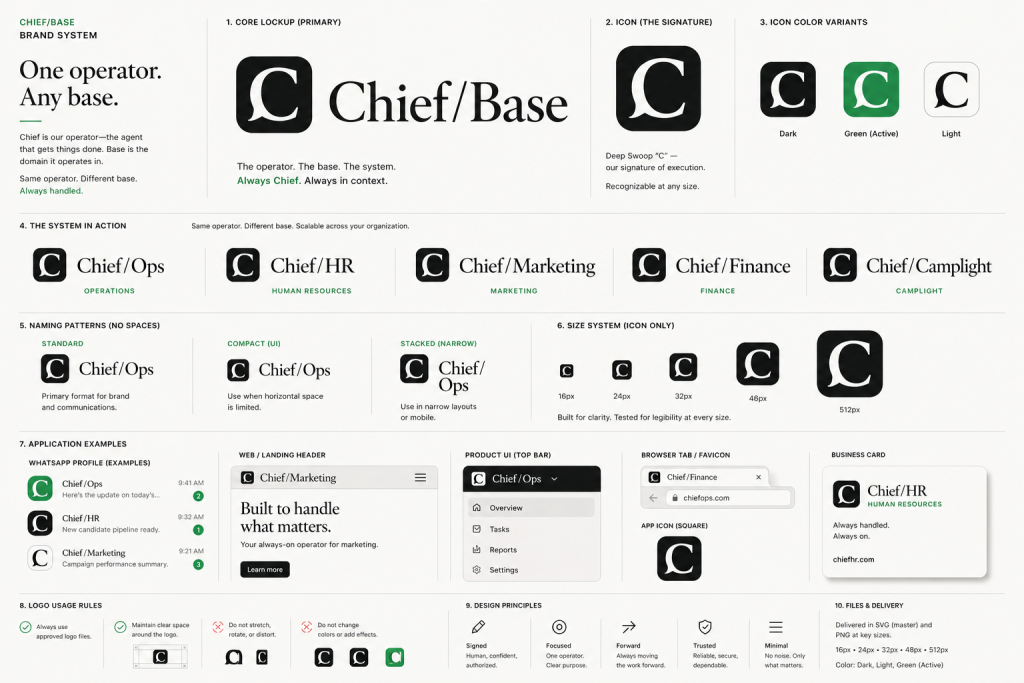

In the first week of Chiefbase, we ran a design sprint, created a brand system, and launched with lean canvases and all the other jazz needed to align the team.

Validation changed a lot with the advent of AI. Five years ago we’d build one prototype, one proof-of-concept, and call it done. Now we can “boil the ocean” — ship many cheap experiments in parallel, each a different beachhead bet, and let real engagement tell us which one is the real wedge.

Our latest experiment is one of those “try Chief before you talk to us” entry points we launched alongside the Chiefbase site to gather evidence of demand. This post is also an open notebook for how we got here. Let me explain.

How we validate AI products at Camplight

Step one isn’t a Figma file. It’s customer interviews. With AI we can do signal-based targeting. Unsexy, I know, but it works.

Chiefbase is a product bet to help HR, staffing & recruitment agencies become AI-native.

Before building anything customer-facing, we ran three open-ended conversations with operators in staffing and recruitment: one running a Sofia-based agency with eight recruiters, one a veteran who’d run his own placement company for over a decade, and one inside Camplight running our internal recruitment.

We weren’t trying to validate Chiefbase. We were trying to find out what was actually painful.

Three things surfaced that we would not have predicted from the outside:

1. Sourcing isn’t the bottleneck in this market. The veteran put it bluntly: “Ninety-five percent of effort is convincing people to apply. Five percent is matching.” In Bulgaria specifically, low unemployment means candidates rarely self-apply — every placement requires a real, somewhat-charming, human conversation. Any tool that automates “find me ten Java developers” misses the actual job. If we’d built sourcing-first, we’d have built the wrong product.

2. The pain that has recruiters lighting hours on fire is CV reformatting. A senior engineer with fifteen years of experience scored 42% on a client’s AI screening tool — not because they weren’t qualified, but because their CV wasn’t in the format the screener liked. The recruiter spent an hour reformatting it by hand. They do this every week. This is the kind of pain you only find by listening.

3. The reason in-ATS AI keeps failing isn’t model quality. It’s context starvation. A Reddit thread we ran in parallel surfaced the framing cleanly: recruiter judgment lives across four other surfaces.

- The LinkedIn profile in the next tab,

- yesterday’s email reply,

- the Slack DM from the hiring manager,

- the 1:1 notes from last week.

The platform AI is doing its job correctly with one-tenth the information the recruiter has. That’s why every recruiter we talked to said the same thing: “I use ChatGPT in another tab and ignore the platform AI.”

Three conversations, one Reddit thread. We already knew more than three months of building from a brief would have taught us.

There’s a term I really like called YODA: Your-Own-DatA. We don’t rely on Perplexity-style desk research because there’s a big gap between what generic AI summarises as “the market” and what we can actually reproduce as a success in our own delivery.

Three lead magnets to validate AI product demand

If the lesson is “AI needs to live where the recruiter already works,” the question becomes: how do we get something into their hands today without building a year of product first?

We launched Chiefbase with three lead magnets, each designed to validate a different hypothesis:

- Chief on WhatsApp: the agent your ops team can actually text. WhatsApp because it’s universal, frictionless, sits on every phone, and lives in the exact context-rich surface where recruiters already operate. No install, no login, no walled garden.

- The Agentic Gap: a 60-second side-by-side comparison: single-shot LLM versus an agentic workflow on a real job order. Validates whether the thesis lands — “your AI is generic, your agency isn’t” — before anyone has to take our word for it.

- Couch-to-5K for AI: a free 10-day course. Replace one recurring recruitment task per day, five minutes each. Validates whether agencies will commit twenty-five minutes a week (five minutes a day across a five-day working week) to actually changing their workflow, which is the leading indicator for whether they’ll commit to a Pilot later.

Three surfaces. Three different bets. Three different signals coming in.

The combined cost to ship was a fraction of one mid-sized SaaS build. If one of them gets disproportionate engagement, we’ll know which hypothesis was the real one. None of them required us to guess.

Why we chose Hermes over OpenClaw for our agent stack

The same lens applies to the technology choices underneath.

Our first attempt at a multi-agent system was on OpenClaw with three coordinated instances.

It didn’t land. The agents felt dumb: we’d give them a clear instruction, and two turns later they’d be hallucinating their way back to a generic answer.

We were constantly reminding them of context they should have remembered. Anyone who’s built a multi-agent system this year knows the failure mode: the agents technically work but they don’t collaborate.

We tried to fix it with our own harness. We open-sourced the result at github.com/camplight/orgops — an event-based protocol that lets two independently-running agents communicate without binary-encoded RPC awkwardness.

Worth a read if you’re building multi-agent systems and the existing primitives are letting you down. We learned a lot but didn’t ship a product on it: building infrastructure for infrastructure’s sake is its own version of the build-and-pray trap.
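To illustrate the general idea of event-based communication between agents, here is a minimal sketch in Python. It is not the orgops API: the event names (`cv.received`, `cv.screened`), the `screener` agent, and the JSON shape are all hypothetical, and the two agents are simulated with threads and in-memory queues rather than independently running processes.

```python
import json
import queue
import threading


def make_event(sender: str, kind: str, payload: dict) -> str:
    # Events are plain JSON text, so either side can log or inspect them.
    return json.dumps({"from": sender, "type": kind, "payload": payload})


def screener(inbox: queue.Queue, outbox: queue.Queue) -> None:
    # Hypothetical agent: reacts to "cv.received" and emits "cv.screened".
    while True:
        event = json.loads(inbox.get())
        if event["type"] == "shutdown":
            break
        if event["type"] == "cv.received":
            candidate = event["payload"]["candidate"]
            outbox.put(make_event("screener", "cv.screened",
                                  {"candidate": candidate}))


to_screener: queue.Queue = queue.Queue()
from_screener: queue.Queue = queue.Queue()
worker = threading.Thread(target=screener, args=(to_screener, from_screener))
worker.start()

# The "intake" side only publishes events; it never calls the screener directly.
to_screener.put(make_event("intake", "cv.received",
                           {"candidate": "example-candidate"}))
reply = json.loads(from_screener.get(timeout=5))

to_screener.put(make_event("intake", "shutdown", {}))
worker.join()
```

Neither side knows the other's functions or classes. They only agree on event types and a text format, which is what lets each agent be restarted or replaced independently.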

We then evaluated more agent frameworks, facing the typical build-vs-buy dilemma. The final decision came down to Hermes vs OpenClaw.

What landed for us was Hermes: agent-loop ergonomics that behave the way you actually want, plus a persistent memory layer so the bot doesn’t re-introduce itself every turn or forget who Dany is between conversations.
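For readers who have not worked with a memory layer, here is a minimal sketch in Python of what "persistent" buys you. It does not reflect the Hermes or Honcho APIs: the `FileMemory` class, its methods, and the JSON file are hypothetical stand-ins for the idea of state that survives between conversations.

```python
import json
import tempfile
from pathlib import Path


class FileMemory:
    """Hypothetical per-user fact store backed by a JSON file."""

    def __init__(self, path: Path) -> None:
        self.path = Path(path)
        # Load whatever earlier conversations saved, if anything.
        self.facts = (json.loads(self.path.read_text())
                      if self.path.exists() else {})

    def remember(self, user_id: str, key: str, value: str) -> None:
        self.facts.setdefault(user_id, {})[key] = value
        # Write through on every update so a restart loses nothing.
        self.path.write_text(json.dumps(self.facts))

    def recall(self, user_id: str, key: str, default=None):
        return self.facts.get(user_id, {}).get(key, default)


store_path = Path(tempfile.mkdtemp()) / "memory.json"

first_session = FileMemory(store_path)
first_session.remember("user-1", "name", "Dany")

# A new object simulates a later conversation after the agent restarted.
second_session = FileMemory(store_path)
recalled = second_session.recall("user-1", "name")
```

A real memory layer does far more than key-value lookup, but the contract is the same: what the agent learned in one conversation is available in the next without the user repeating it.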

We used exe.dev and DigitalOcean for two deployments: one internal to Camplight, one public-facing. Both run on Honcho for persistent agent memory.

The internal proof point: we’ve been running this same stack on Camplight’s own AI-native workflows for months. CV screening, CV reformatting into a partner format, automated review of engineering homework assignments. The same agent ergonomics that power Chief on WhatsApp have been compounding on real internal work. Chiefbase is the externalized, packaged version of tools we already use every day.

What we’re learning from our AI validation experiments

Chief on WhatsApp is live. So are the other two lead magnets. The first conversations with recruiters are happening. We don’t have a polished case study yet, and that’s the point.

What we’ll tell you over the coming weeks: which of the three pain points has actual commercial pull, what the ICP for a recruiter agent looks like in practice, whether WhatsApp is the right surface or just the cheapest one, and which of the three lead magnets is doing the heavy lifting on demand validation. We’ll be wrong about some things. We’ll publish what we learn either way.

If you’re sitting on a new product idea

The methodology generalizes. If you’re an established business with a new-product idea and you’re about to commit a quarter to a build-and-pray cycle: don’t.

Talk to five people first. Put the cheapest possible thing — ideally three different cheap things — into their hands. See what they actually do with it. That is the post-mortem you don’t have to write, because the bad version of the product never existed.

And if you came here for Chief specifically: scroll back up. Try the live demo. Or jump to The Agentic Gap and Couch-to-5K. We built these for you to use, not to read about.

Sitting on a new AI product idea?

We help established businesses validate new product bets the un-SaaS way. Cheapest possible surface, real customer conversations, three parallel experiments instead of one big build. If that loop sounds like what your team needs, let’s talk.

FAQ

What does “validation-first” mean for a new AI product?

Validation-first means putting cheap, customer-facing surfaces in front of real users before committing to a full build. Instead of one polished MVP, you ship multiple low-cost lead magnets — each designed to test a different hypothesis about who the customer is and what they want. Engagement data tells you which hypothesis was correct before you’ve spent a quarter building the wrong thing.

Why use multiple lead magnets instead of a single MVP?

A single MVP forces you to commit to one hypothesis about the customer and the wedge. Multiple lead magnets let you run parallel bets — a live demo for the operator who wants to feel the product, an interactive comparison for the buyer who needs proof of the thesis, a short course for the prospect who needs onboarding. The one that gets disproportionate engagement is the real wedge. The combined cost of three lead magnets is still a fraction of one mid-sized SaaS build.

How do you choose between agent frameworks like Hermes and OpenClaw?

We tried OpenClaw first and ran into the classic multi-agent failure mode: the agents worked individually but didn’t collaborate. We open-sourced our own harness (orgops) as an experiment, learned what good event-based protocols look like, then adopted Hermes because it removed the most friction from our day-to-day work — agent-loop ergonomics plus a persistent memory layer (Honcho) so the bot doesn’t re-introduce itself every turn. The choice was less about which framework is “best” in the abstract and more about which one let us iterate fastest on the actual conversation.

Camplight is a worker-owned software cooperative founded in 2012. We help B2B teams validate, build, and scale digital products and ventures — from zero to $10M+ ARR. Our validation-first approach has driven a 95% client satisfaction rate across 300+ delivered projects in FinTech, HealthTech, EdTech, and beyond.